Microsoft’s AI gold rush, health apps’ data-sharing spree, Mozilla vs. trackers. AdGuard’s digest

In this edition of AdGuard’s digest: Microsoft sacks AI ethics team, mental health platforms leak data, Firefox expands anti-tracking protections, while Germans oppose chat control.

No ethics — no problem? Microsoft gets rid of AI ethics team

As Microsoft continues to integrate AI into its products at lightning speed, it has laid off the entire ‘ethics and society’ team that was responsible for ensuring it did so responsibly, Platformer reported.

A former team member told Platformer that while Microsoft still has an Office of Responsible AI, regular employees often have no idea how to apply its principles to reality. The role of the ethics and society team, he said, was to explain what these principles mean in practice and to create rules. In a statement to Platformer, Microsoft denied any change in its approach to AI.

It’s understandable that Microsoft wants to win the AI race, in which it is currently outpacing Google, having just made its AI-powered search engine mode, Bing Chat, available to everyone. But if the dismissal of an ethics team signals that Microsoft is going to rush ahead with AI adoption no matter what, this could pose risks to users’ privacy and security. Also worrying are reports that Microsoft, in an attempt to monetize the success of the new Bing, is planning to insert contextual ads into the chatbot’s responses. Before taking such a step, Microsoft probably could have used input from the ethics team, but alas, it has already been sacked.

Better Help NOT: Mental health app shared user data with Facebook

Popular therapy app BetterHelp will have to pay $7.8 million to consumers for disclosing their sensitive mental health information to advertisers, such as Facebook and Snapchat, without consent. BetterHelp had repeatedly promised its users that it would not share their private health information except for the purposes of therapy, the US Federal Trade Commission (FTC) said.

The company shared patients’ email addresses, along with the fact that they were attending therapy, with Facebook, allowing the social media giant to “identify similar consumers and target them with advertisements,” the FTC revealed. Allegations that BetterHelp was misusing user data go as far back as 2020, but the company kept denying them. From now on, BetterHelp will have to obtain explicit consent from users before sharing their personal information.

It’s disconcerting to see a platform that aims to help people through mental health crises betray their trust. This also shows that no matter what privacy claims an app makes, there is nothing technically stopping it from sharing the data it collects with third parties. To make sure you’re on the safe side, you can check a mental health app against Mozilla’s privacy guide. Spoiler: BetterHelp scored terribly in it.

3.1 million patients’ data leaked, the culprit: a tracking pixel

They say when it rains, it pours. Another mental health platform, Cerebral, has revealed that it was sending patient data to Facebook, Google, and TikTok from October 2019 until recently. Cerebral is a telehealth startup that specializes in the treatment of mental health problems such as depression, bipolar disorder, insomnia, and anxiety.

Facebook’s, Google’s, and TikTok’s tracking pixels were embedded in Cerebral’s online services, which exposed the personal data of more than 3.1 million people to third parties. The amount of information exposed about a person depended on what that person was doing on the platform. It could have ranged from name, phone number, email address, and IP address to appointment and treatment details, Cerebral admitted.

The company said it has since “disabled, reconfigured and/or removed” the pixels. Tracking pixels are tiny transparent images embedded in online content such as ads, web pages, and emails. When the image loads, the browser sends a request to the tracker’s server, which helps marketers measure ad clicks and other metrics. The Cerebral case is yet another example of healthcare providers’ cavalier attitude to privacy. Such an attitude not only puts a healthcare provider in potential breach of patient data protection law, but could also cost its customers dearly if the data falls into the wrong hands.
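To make the mechanism more concrete, here is a minimal Python sketch of how a pixel request can carry page details to a third party. The host `tracker.example` and the parameter names are hypothetical and chosen purely for illustration; they are not the ones Cerebral or any real ad network used.

```python
from html import escape
from urllib.parse import parse_qs, urlencode, urlparse

# Hypothetical tracker host and parameter names, for illustration only.
TRACKER_HOST = "tracker.example"


def build_pixel_url(event: dict) -> str:
    """Encode page-level details into the query string of an image URL."""
    return f"https://{TRACKER_HOST}/pixel.gif?{urlencode(event)}"


def pixel_img_tag(url: str) -> str:
    """Render the invisible 1x1 image whose loading triggers the request."""
    return f'<img src="{escape(url)}" width="1" height="1" alt="">'


def what_tracker_receives(url: str) -> dict:
    """Decode the query string, as the third party's server could."""
    query = parse_qs(urlparse(url).query)
    return {key: values[0] for key, values in query.items()}


url = build_pixel_url({"event": "appointment_booked", "email": "jane@example.com"})
print(pixel_img_tag(url))
print(what_tracker_receives(url))
```

On top of whatever the page puts in the URL, the browser automatically sends the visitor’s IP address and user agent with the image request, which is why even a “blank” pixel reveals something.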

One more reason to love Mozilla: Firefox steps up tracking protections

It’s no secret that we have a soft spot for Mozilla: after all, it has just saved ad blockers from Chromacalypse. But credit where credit is due. This time, Mozilla has done another good deed by extending the protection against tracking cookies in its Firefox browser to Android.

The feature, known as Total Cookie Protection (TCP), blocks cross-site tracking by “hiding” third-party cookies from anyone except the site that planted them in the user’s browser. This means trackers can no longer see each other’s cookies, which makes it harder for them to follow you with annoying ads wherever you go. The feature became the default for Firefox users on Windows, Mac, and Linux last year and has now finally arrived on mobile.
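The idea can be illustrated with a toy model in Python. This is a simplified sketch, not Firefox’s actual implementation: the class names and hosts are hypothetical, and it ignores any exceptions a real browser may make. It contrasts one shared cookie jar with a jar that is keyed by the site the user is visiting.

```python
class SharedCookieJar:
    """Classic behavior: a tracker's cookie is the same on every site."""

    def __init__(self):
        self._cookies = {}

    def set(self, top_level_site, cookie_host, name, value):
        # The visited site is ignored, so the cookie is visible everywhere.
        self._cookies[(cookie_host, name)] = value

    def get(self, top_level_site, cookie_host, name):
        return self._cookies.get((cookie_host, name))


class PartitionedCookieJar:
    """Partitioned behavior: each visited site gets its own cookie jar."""

    def __init__(self):
        self._cookies = {}

    def set(self, top_level_site, cookie_host, name, value):
        # The visited site is part of the key, so jars never mix.
        self._cookies[(top_level_site, cookie_host, name)] = value

    def get(self, top_level_site, cookie_host, name):
        return self._cookies.get((top_level_site, cookie_host, name))


def tracker_recognizes_user(jar) -> bool:
    """Tracker sets an ID on one site and tries to read it on another."""
    jar.set("news.example", "tracker.example", "uid", "abc123")
    return jar.get("shop.example", "tracker.example", "uid") == "abc123"


print(tracker_recognizes_user(SharedCookieJar()))
print(tracker_recognizes_user(PartitionedCookieJar()))
```

With the shared jar, the tracker embedded on both sites reads back the same ID and can link the two visits. With the partitioned jar, the second site starts from an empty jar, so the lookup fails.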

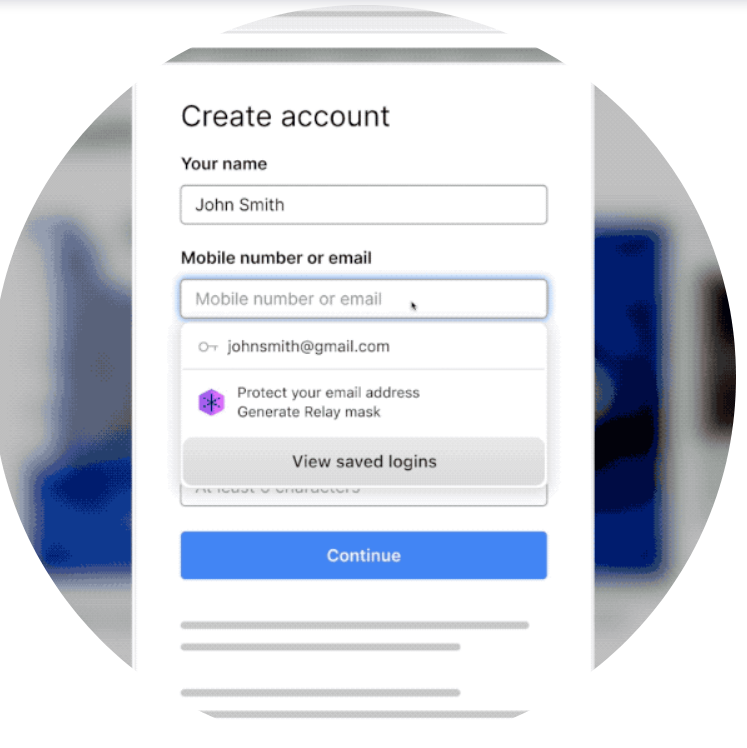

Mozilla also announced that its Firefox Relay service, which allows users to hide their real email address from trackers and spammers, is now available directly in the browser. On some sites, Firefox Relay users will automatically be prompted to sign in with a mask instead of their real email address.

Source: Mozilla

Mozilla has once again proved that it’s committed to privacy. Firefox users will now be protected from tracking cookies whether they surf the web on their computer or on their phone. What’s not to love about that?

Germany opposes EU Commission plan to scan messages client-side

The EU’s proposal to scan messages for child sexual abuse material (CSAM) was met with widespread criticism at a special hearing at the German parliament. According to an official report on the meeting of the Bundestag’s Digital Committee, participants described the plan as technically unrealistic, “legally questionable” and an “immense threat to privacy.”

The experts said that the proposed law, which would require Meta, Apple, and other big tech companies to analyze the contents of people’s messages on their devices, would fail to stop the spread of CSAM and would only create “a massive surveillance infrastructure.” The proposed law makes tech companies responsible for finding and stopping not only known CSAM, which they could check against a database of prohibited content, but also new material and “grooming” in text messages. The experts also pointed out that with such a large-scale effort, even a one percent error rate could result in billions of false positives, meaning that innocent people may be accused.
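A quick back-of-the-envelope calculation shows why the scale matters. The daily message volume below is an illustrative assumption of ours, not a figure from the hearing; only the one percent error rate comes from the experts’ remarks.

```python
def expected_false_positives(messages_scanned: int, false_positive_rate: float) -> int:
    """Number of harmless messages wrongly flagged, rounded to a whole message."""
    return round(messages_scanned * false_positive_rate)


# Assumption for illustration: 100 billion messages scanned per day.
ASSUMED_DAILY_MESSAGES = 100_000_000_000
# Error rate mentioned by the experts.
ERROR_RATE = 0.01

flagged_per_day = expected_false_positives(ASSUMED_DAILY_MESSAGES, ERROR_RATE)
print(f"{flagged_per_day:,} messages wrongly flagged per day")
```

Under these assumptions, a single day of scanning would already flag around a billion harmless messages, each of which would need some form of follow-up review.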

We have raised similar concerns. The law, if passed, has the potential to erode EU privacy protections and undermine end-to-end encryption. We hope that Berlin, as a major power with a voice in the Council of the EU, will raise these concerns at the EU level and try to prevent the law from being passed in its current form.